Reinforcement Learning · UEC Tokyo · 2026

Building reliable agents under uncertainty.

I'm Zhiqiang He (何志强) — a Ph.D. researcher at UEC Tokyo, working on plasticity, world models, and large-scale RL systems deployed in real environments.

42.8

Highest impact factor (IEEE COMST 2025)

7

Q1 papers across IEEE TMM, PR, InfoSci, CTR

10K

Followers on Zhihu

¥2.2M

JST Next-Generation Researcher (2025-27)

Selected venues · reviewing & publishing

Research pillars

Four threads, one goal: agents that don't break.

Plasticity

Networks that stay malleable when objectives, distributions, and rewards keep changing.

World Models

Learned models that plan, prune, and search — connecting representation to control.

Multi-Agent RL

Scalable, reliable cooperation across many agents — from card games to traffic networks.

Real-System RL

Stable, sample-efficient RL for production — adaptive streaming, networking, control.

Selected publications

Recent work I'm most proud of.

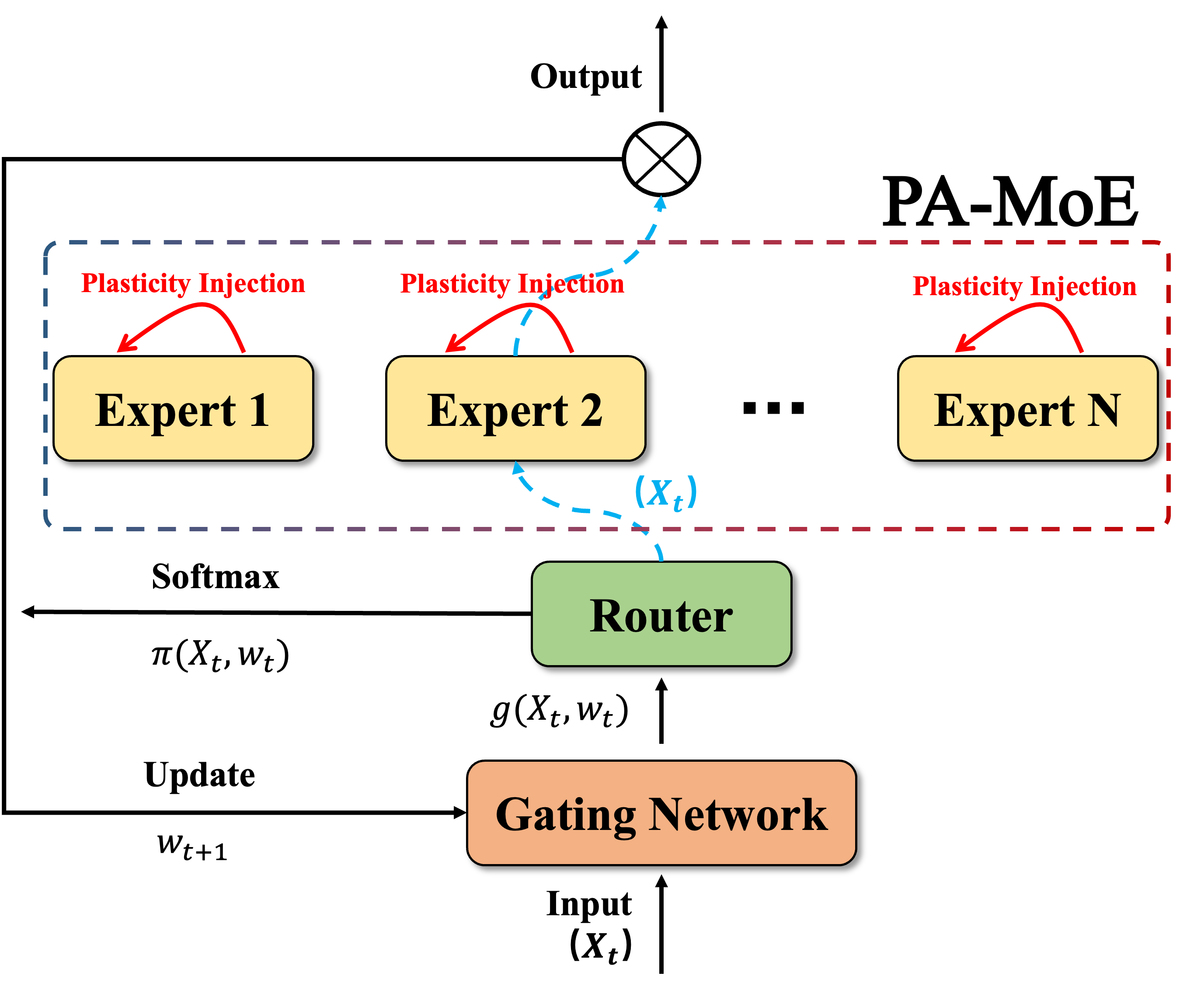

Plasticity-Aware Mixture of Experts for Learning Under QoE Shifts in Adaptive Video Streaming

Zhiqiang He, Zhi Liu

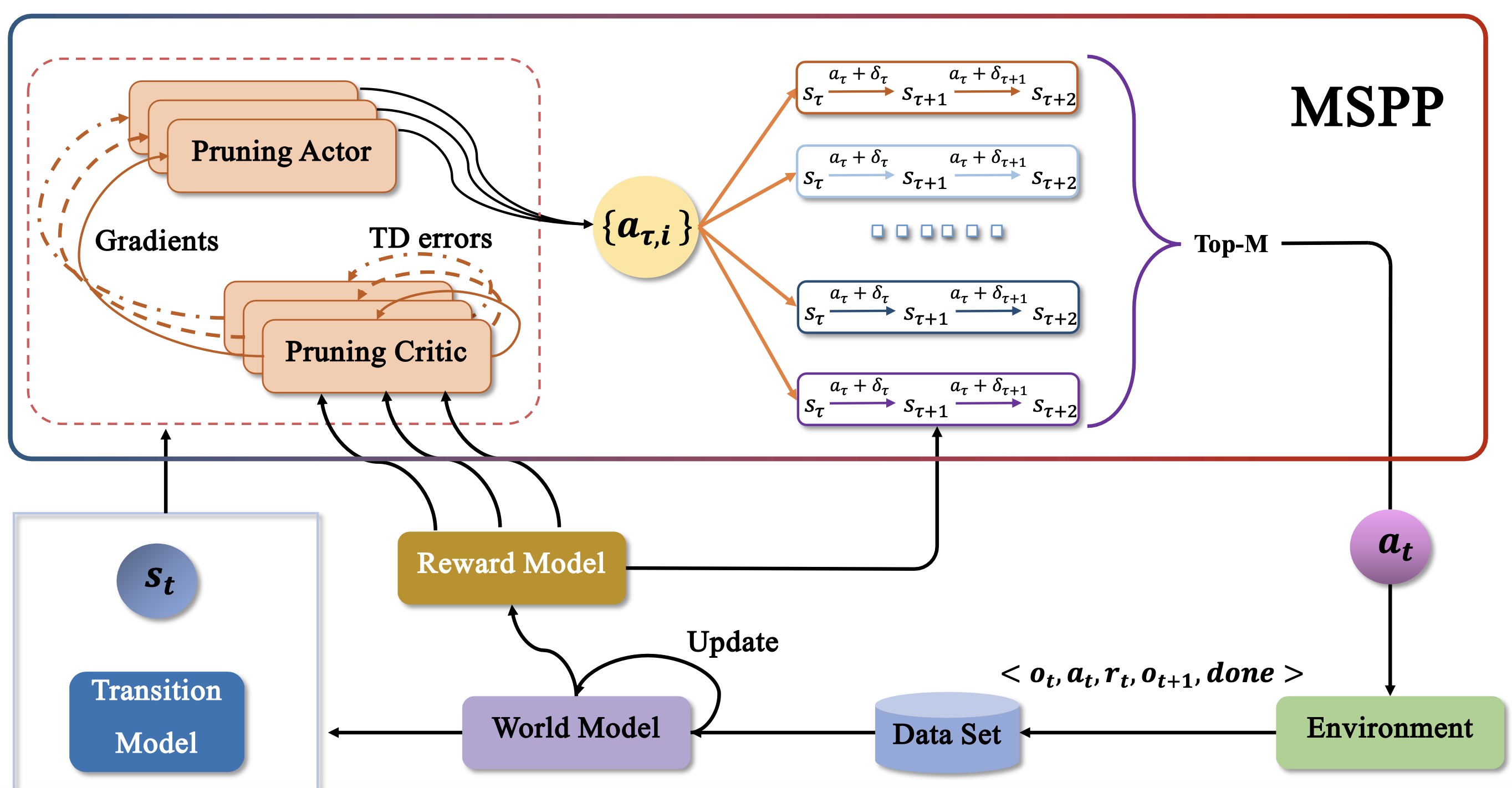

Understanding World Models through Multi-Step Pruning Policy via Reinforcement Learning

Zhiqiang He, Wen Qiu, Wei Zhao, Xun Shao, Zhi Liu

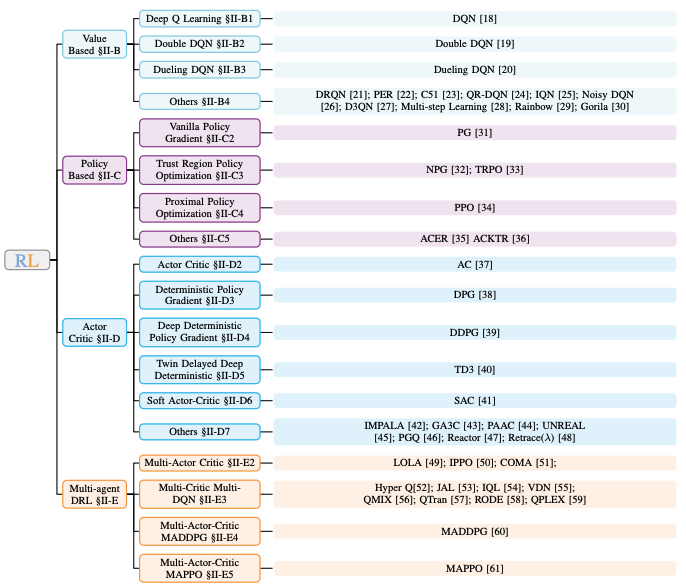

A Survey on DRL based UAV Communications and Networking: DRL Fundamentals, Applications and Implementations

Wei Zhao, Shaoxin Cui, Wen Qiu*, Zhiqiang He*, Zhi Liu, Xiao Zheng, Bomin Mao, Nei Kato

News & milestones

Selected moments.

- Jan 2026
- Sep 2025: Papers on multi-agent RL for traffic and on DRL-based UAV communications accepted to leading journals.
- Apr 2025: Recognized as JST Next-Generation Researcher (¥2.2M / year, 2025–2027).
- Apr 2024: Started Ph.D. at the University of Electro-Communications (UEC), Tokyo, with Prof. Zhi Liu.
- May 2023: Concluded role as Reinforcement Learning Algorithms Engineer at InspirAI (Top-Performing Team Prize).
- Jun 2021: Joined Baidu (Beijing) as Reinforcement Learning Research Intern (Super Special Offer).
- Jun 2019: Selected as Outstanding Graduate (Top 1%) at East China Jiaotong University.

Open to collaboration

Let's build agents that don't break.

Always happy to discuss reinforcement learning — data efficiency, stability, large-scale deployment, continual learning. Drop a note with a brief intro.